Source: thehackernews.com

May 19, 2023 | Ravie Lakshmanan | Artificial Intelligence / Cyber Threat

Malicious Google Search ads for generative AI services like OpenAI ChatGPT and Midjourney are being used to direct users to sketchy websites as part of a BATLOADER campaign designed to deliver RedLine Stealer malware.

“Both AI services are extremely popular but lack first-party standalone apps (i.e., users interface with ChatGPT via their web interface while Midjourney uses Discord),” eSentire said in an analysis.

“This vacuum has been exploited by threat actors looking to drive AI app-seekers to imposter web pages promoting fake apps.”

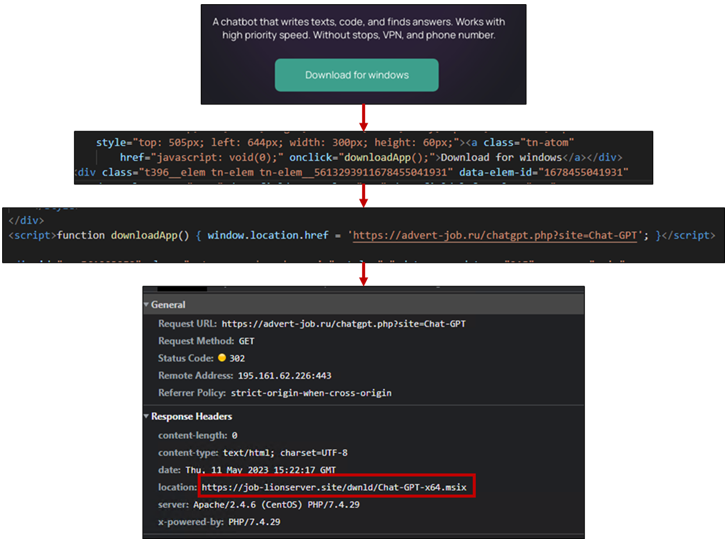

BATLOADER is a loader malware propagated via drive-by downloads: users searching for certain keywords on search engines are shown bogus ads that, when clicked, redirect them to rogue landing pages hosting malware.

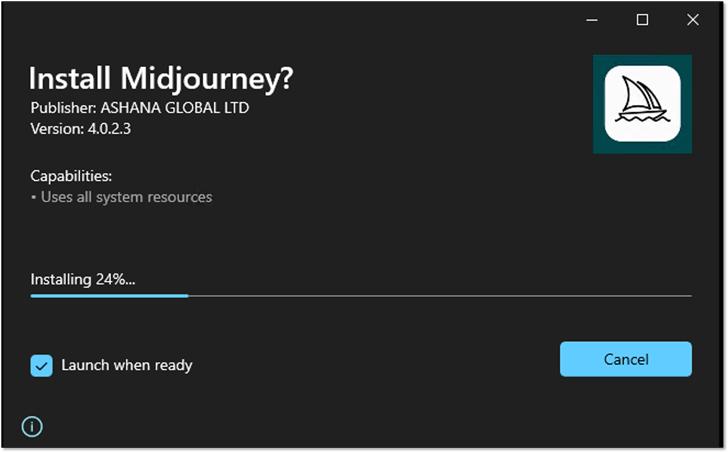

The installer file, per eSentire, is rigged with an executable file (ChatGPT.exe or midjourney.exe) and a PowerShell script (Chat.ps1 or Chat-Ready.ps1) that downloads and loads RedLine Stealer from a remote server.

Once the installation is complete, the binary uses Microsoft Edge WebView2 to load chat.openai[.]com or www.midjourney[.]com, the legitimate ChatGPT and Midjourney URLs, in a pop-up window so as not to raise any red flags.

The adversary’s use of ChatGPT and Midjourney-themed lures to serve malicious ads and ultimately drop the RedLine Stealer malware was also highlighted last week by Trend Micro.

This is not the first time the operators behind BATLOADER have capitalized on the AI craze to distribute malware. In March 2023, eSentire detailed a similar set of attacks that leveraged ChatGPT lures to deploy Vidar Stealer and Ursnif.

The cybersecurity company further pointed out that abuse of Google Search ads has fallen off from its early 2023 peak, suggesting that the tech giant is taking active steps to curtail it.

The development aligns with a broader wave of phishing and scam campaigns, wherein threat actors are attempting to cash in on the surging use of these AI tools to distribute malware and other bogus apps.

Security vendor Sophos, in related research, outlined a set of ChatGPT-related fleeceware apps in the Google Play Store and Apple App Store, collectively dubbed FleeceGPT, that coerce users into signing up for unwanted subscriptions.

“Because fleeceware applications are designed to stay on the edge of Apple and Google terms of service and do not access private information or attempt to circumvent platform security, they are rarely rejected during review and are allowed into the app stores,” Sophos researchers Jagadeesh Chandraiah and Sean Gallagher said.

In recent weeks, Check Point, Meta, and Palo Alto Networks Unit 42 have warned of increasing fraudulent activity mimicking the ChatGPT service to harvest users’ credit card details, perpetrate credit card fraud, and steal victims’ Facebook account details via copycat chatbot web browser extensions.

Between November 2022 and early April 2023, Unit 42 said it detected a 910% increase in monthly registrations for domains related to ChatGPT.
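Percentage increases of this size are easy to misread. Assuming the 910% figure is measured against the monthly registration count at the start of the period (the baseline month is not spelled out here), the arithmetic works out as follows; $N_0$ and $N_1$ are illustrative symbols, not Unit 42's notation.

```latex
% N_0: monthly ChatGPT-related domain registrations at the start of the period (assumed baseline)
% N_1: monthly ChatGPT-related domain registrations at the end of the period
\[
\frac{N_1 - N_0}{N_0} = 9.10
\quad\Longrightarrow\quad
N_1 = (1 + 9.10)\,N_0 = 10.1\,N_0
\]
```

In other words, under that assumption the later monthly count is roughly ten times the baseline (10.1x), not 9.1x.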

The findings come weeks after Securonix uncovered a phishing campaign dubbed OCX#HARVESTER that targeted the cryptocurrency sector between December 2022 and March 2023 with More_eggs (aka Golden Chickens), a JavaScript downloader that’s used to serve additional payloads.

In January, eSentire traced the identity of one of the key operators of the More_eggs malware-as-a-service (MaaS) operation to an individual located in Montreal, Canada. The second threat actor associated with the group has since been identified as a Romanian national who goes by the alias Jack.

Original post URL: https://thehackernews.com/2023/05/searching-for-ai-tools-watch-out-for.html
